Does ChatGPT Make Novice Code Better — and What Should Interviews Test Now?

Yes—but it's not the win people think it is.

If you measure "better code" as fewer style violations and lower complexity, then ChatGPT helps. If you measure "better code" as correctness under constraints, long-term maintainability, and sound judgment, the answer is more complicated.

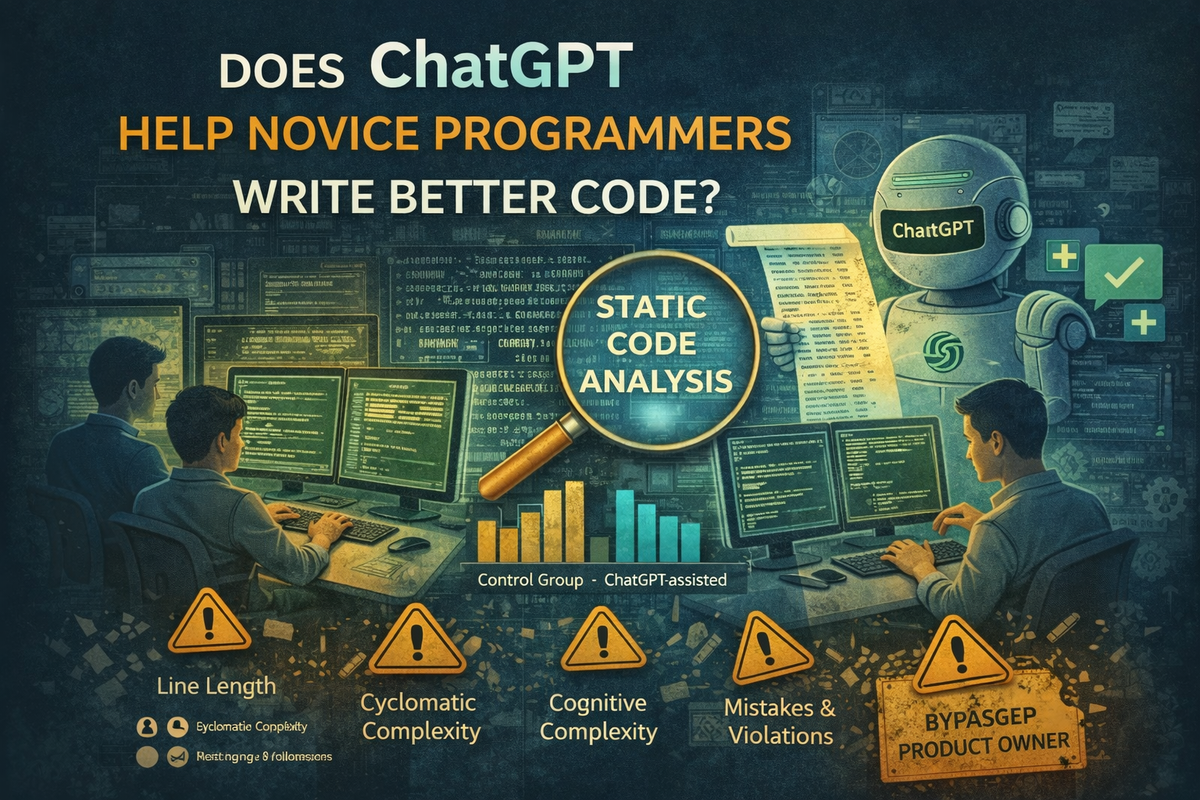

A 2024 IEEE Access study looked at novice programmers in an intro Java course and compared student submissions with and without ChatGPT assistance. Using static analysis (Checkstyle + SonarQube), they found the ChatGPT-assisted group produced code with fewer coding convention violations and lower cyclomatic and cognitive complexity.

That's real. But the interesting takeaway isn't "AI makes students code better."

It's this: AI improves the exact metrics that are easiest to measure—which can trick teams into thinking quality improved more than it did.

I'm attaching the full paper in this post so you can read it end-to-end. This article is the practical distillation: what improved, what didn't, and what this should change about interviews and team processes.

TL;DR

- Novices using ChatGPT had fewer violations of Java coding conventions and lower cyclomatic and cognitive complexity.

- The biggest improvements showed up in specific rule categories: line length, final parameters, and several structure/syntax rules.

- The "hardest" topics for both groups were Collections, File I/O & Streams, and Object-Oriented Programming (most violations).

- The paper cautions that static analysis is an incomplete proxy for "high-quality maintainable code," and the sample is limited to novices in a single course setting.

- Practical implication: AI pushes teams toward surface-level cleanliness; senior engineers must enforce invariants, tests, and architecture boundaries so "clean-looking" doesn't become "quietly wrong."

Context and Caveats

Studies in CS education often have modest sample sizes (one course, one university). Treat findings like this as directional signals, not universal truths about how the entire industry behaves.

That said, the authors found statistically significant differences across several static-analysis metrics, all pointing the same way: students who used ChatGPT produced code that was cleaner and less complex according to static analysis.

This matches what I've seen in practice: for junior developers, ChatGPT often helps with style, boilerplate, and basic structure. It's like an untiring junior engineer pushing toward reasonable conventions.

Where we need to be careful:

- ChatGPT has no real awareness of your architecture—your layers, orchestrators, state machines, privacy/compliance constraints, or system invariants. It optimizes for "looks good in isolation," not "fits our system."

- It's weakest exactly where senior engineers earn their keep: subtle concurrency behavior, performance tradeoffs, security, and complex state. It can sound clean and convincing while being wrong.

- It can slow you down when you already know what you want: explaining constraints, reviewing output, and fixing the last 10% can cost more than writing it yourself.

- Over-reliance can quietly dull your edge: less API "muscle memory," less time reading primary docs, and a subtle drift from "best practice" to "whatever the model believes is standard."

My summary: For junior/mid developers, tools like ChatGPT likely improve code style and reduce measured complexity on average. For senior-level work, they are useful for scaffolding and drafts, but they do not replace design, architecture, or deep debugging. Treat them like a noisy junior: helpful, but always in need of code review.

What the Study Actually Did

The study compared two cohorts of part-time undergraduates in an intro Java course, none with prior Java experience. One cohort took the course before ChatGPT existed and so had no access to it; the other could choose to use ChatGPT.

Student submissions were analyzed using:

- Checkstyle to detect violations of a ruleset based on Java coding conventions

- SonarQube to compute cognitive complexity

- Static analysis to compute cyclomatic complexity

They used a non-parametric test (Wilcoxon Rank Sum) because distributions were skewed.
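
For readers who have not met the test: the Wilcoxon rank-sum test ranks all observations from both groups together and compares the sum of ranks in one group against what chance would produce, so a few extreme submissions cannot dominate the result. The sketch below computes only the rank sum and the related Mann-Whitney U statistic, not a p-value. The class name, method names, and all numbers are invented for illustration and are not data from the paper.

```java
import java.util.Arrays;

// Sketch of the Wilcoxon rank-sum statistic. The numbers in main are invented
// for illustration and are NOT data from the paper.
final class RankSumSketch {

    /** Sum of the ranks of sample a within the pooled data, using average ranks for ties. */
    static double rankSum(double[] a, double[] b) {
        double[] pooled = new double[a.length + b.length];
        System.arraycopy(a, 0, pooled, 0, a.length);
        System.arraycopy(b, 0, pooled, a.length, b.length);
        Arrays.sort(pooled);

        double sum = 0.0;
        for (double value : a) {
            sum += averageRank(pooled, value);
        }
        return sum;
    }

    /** Average 1-based rank of value in the sorted array (tied values share a rank). */
    private static double averageRank(double[] sorted, double value) {
        int first = -1;
        int last = -1;
        for (int i = 0; i < sorted.length; i++) {
            if (sorted[i] == value) {
                if (first < 0) {
                    first = i;
                }
                last = i;
            }
        }
        return (first + last) / 2.0 + 1.0;
    }

    /** Mann-Whitney U for sample a, derived from its rank sum. */
    static double uStatistic(double[] a, double[] b) {
        return rankSum(a, b) - a.length * (a.length + 1) / 2.0;
    }

    public static void main(String[] args) {
        double[] withAssistant = {3, 5, 4, 2, 6};      // hypothetical violation counts
        double[] withoutAssistant = {9, 7, 12, 8, 30}; // hypothetical, note the outlier
        System.out.println("W = " + rankSum(withAssistant, withoutAssistant)); // prints W = 15.0
        System.out.println("U = " + uStatistic(withAssistant, withoutAssistant)); // prints U = 0.0
    }
}
```

In this toy data the outlier of 30 counts only as "the largest value", which is why a rank-based test suits skewed distributions. A U of 0 means every value in the first sample ranks below every value in the second.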

The point: this isn't a vibes-based argument. It's a structured comparison using explicit metrics and statistical testing.

What Improved (and Why It's Plausible)

Fewer coding-convention violations

The ChatGPT-assisted group showed fewer violations across multiple coding rules, with strong differences in several categories.

This matches what LLMs are good at: formatting, consistent structure, conventional naming patterns, and "idiomatic-looking" scaffolding.

Lower cyclomatic complexity

The ChatGPT group produced code with lower cyclomatic complexity (statistically significant difference).

Also plausible: LLMs often generate solutions with fewer nested conditionals or push complexity into helper methods.
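
To make that concrete, here is a hypothetical shipping-fee rule written twice. It is not taken from the study's exercises, and the names and amounts are assumptions. The nested method holds four `if` decisions in one place. The flattened one keeps a single guard clause and a conditional expression, and moves the remaining decisions into helpers.

```java
// Hypothetical example: both methods return the same result for every input.
final class ComplexitySketch {

    /** Nested version: up to three levels of nesting to keep in mind at once. */
    static int shippingCentsNested(boolean member, int orderCents, boolean express) {
        int fee;
        if (orderCents > 0) {
            if (member) {
                if (express) {
                    fee = 500;
                } else {
                    fee = 0;
                }
            } else {
                if (express) {
                    fee = 1500;
                } else {
                    fee = 700;
                }
            }
        } else {
            fee = 0;
        }
        return fee;
    }

    /** Flattened version: a guard clause, then delegation to small helpers. */
    static int shippingCentsFlat(boolean member, int orderCents, boolean express) {
        if (orderCents <= 0) {
            return 0;
        }
        return member ? memberFee(express) : guestFee(express);
    }

    private static int memberFee(boolean express) {
        return express ? 500 : 0;
    }

    private static int guestFee(boolean express) {
        return express ? 1500 : 700;
    }
}
```

The behavior is identical. What changed is where the decisions live: each method now scores lower on a per-method metric, while the total number of decisions in the class has not gone down. That is worth remembering when a complexity number improves.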

Lower cognitive complexity—but the effect may be small

Cognitive complexity was also lower for the ChatGPT group, but the authors note the difference in medians was small and treat it as potentially negligible, requiring more research.

That nuance matters: "AI wrote it" does not automatically mean "humans will understand it."

Where the Violations Clustered

Both groups struggled most with exercises involving:

- Collections

- File I/O and Streams

- Object-Oriented Programming

This is useful beyond education. These are the areas where production systems tend to accumulate edge cases, resource-handling pitfalls, leaky abstractions, poor API design decisions, and incorrect assumptions about ownership and lifetimes.

If you're designing onboarding or training plans, this is a hint: these are high-leverage topics to reinforce early.

The Part That Matters: Static Analysis Is Not "Quality"

The paper explicitly calls out a construct validity concern: measuring code quality via static analysis rules and complexity metrics may not capture the full nuance of maintainable, high-quality code.

That becomes a real industry failure mode: "The code looks clean, so we trust it."

LLMs can produce code that is convention-compliant, lower in measured complexity, and "clean" by static tools—while still being subtly incorrect, brittle under real system constraints, poorly aligned with your architecture boundaries, and risky under concurrency, cancellation, retries, and partial failure.

Static tools rarely tell you if the code is correct in your system.
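
A small illustration, assuming a hypothetical domain rule that an order total must never be negative. The first method is short, conventionally named, and has a single path, so convention and complexity checks have nothing to object to. It is still wrong for this system, because the rule lives in the team's heads rather than in the code.

```java
// Hypothetical domain rule for this sketch: an order total must never be negative.
final class QuietlyWrongSketch {

    /** Reads cleanly and has a single path, but ignores the rule above. */
    static long totalCentsNaive(long priceCents, long discountCents) {
        return priceCents - discountCents;
    }

    /** Same signature, with the rule enforced at the boundary. */
    static long totalCentsChecked(long priceCents, long discountCents) {
        if (priceCents < 0 || discountCents < 0) {
            throw new IllegalArgumentException("amounts must be non-negative");
        }
        return Math.max(0L, priceCents - discountCents);
    }

    public static void main(String[] args) {
        System.out.println(totalCentsNaive(500, 800));   // prints -300
        System.out.println(totalCentsChecked(500, 800)); // prints 0
    }
}
```

The checked version scores slightly worse on complexity, since it adds a branch, and it is the one you want in production.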

What This Should Change About Interviews

If candidates can use AI (and they can), then interviews that reward memorizing APIs, writing boilerplate from scratch under pressure, and syntax trivia are increasingly misaligned with the job.

A better evaluation targets things that matter when shipping:

- Reasoning under constraints: What fails in production and why?

- Debugging: Can you isolate failure modes?

- Tradeoffs: Why this design, not that one?

- Boundaries: Where do invariants live and how are they enforced?

- Change safety: How do you ship without breaking users?

AI can help type code. It can't reliably make correct architectural calls for your product.

What This Should Change About Team Process

Treat AI as a productivity multiplier, not a quality guarantee.

This study suggests AI can help novices produce "cleaner-looking" code by static standards. So for real teams, the safety move is structural:

1. Put invariants in tests, not in hopes

- Unit tests for logic

- Integration tests for boundaries

- Property-based tests where justified

2. Separate "surface" from "structure" in PR review

Surface: lint, naming, formatting, low-effort cleanup

Structure: ownership boundaries, error-handling policy, cancellation behavior, retry/backoff policy, observability (logs/metrics), and failure-mode coverage

3. Force the "assumptions" question

Ask in review: "What assumptions does this code rely on?"

LLMs are confident. Incorrect assumptions are expensive.
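
Step 1 can be sketched with nothing but the JDK. The example below is a hand-rolled property check rather than a real property-based testing library. The `backoffMillis` function and its constants are hypothetical. It states two invariants for a retry delay (it stays between a base and a cap, and it never shrinks as attempts increase) and checks them over many random inputs.

```java
import java.util.Random;

// Hand-rolled property check using only the JDK. backoffMillis and its
// constants are hypothetical, chosen for this sketch.
final class BackoffPropertySketch {

    static final long BASE_MILLIS = 100;
    static final long CAP_MILLIS = 30_000;

    /** Exponential backoff: BASE_MILLIS * 2^attempt, capped at CAP_MILLIS. */
    static long backoffMillis(int attempt) {
        if (attempt < 0) {
            throw new IllegalArgumentException("attempt must be >= 0");
        }
        // Bound the shift so the doubling cannot overflow a long.
        int shift = Math.min(attempt, 20);
        return Math.min(CAP_MILLIS, BASE_MILLIS << shift);
    }

    /** Checks both invariants for many random attempts; returns true if all hold. */
    static boolean invariantsHold(long seed, int trials) {
        Random random = new Random(seed);
        for (int i = 0; i < trials; i++) {
            int attempt = random.nextInt(1_000);
            long delay = backoffMillis(attempt);
            long next = backoffMillis(attempt + 1);
            boolean bounded = delay >= BASE_MILLIS && delay <= CAP_MILLIS;
            boolean monotonic = next >= delay;
            if (!bounded || !monotonic) {
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        System.out.println(invariantsHold(42L, 10_000)); // prints true
    }
}
```

The point of writing the invariants down is that they also answer the question in step 3. The assumptions are now executable, so an AI-generated rewrite of `backoffMillis` either satisfies them or fails the build.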

A Senior AI Workflow That Survives Reality

- Use AI for scaffolding and refactors only after you define constraints: inputs/outputs, invariants, error modes, concurrency expectations, performance constraints

- Add automated gates: lint + formatting, unit tests, a small set of boundary tests (network failure, cancellation, retries)

- In review, focus 80% on: invariants, correctness, maintainability under change

- Use AI again, but for review prompts:

  - "List edge cases I might be missing"

  - "What are failure modes if this returns out of order?"

  - "What assumptions does this code rely on?"

This preserves AI's upside while keeping humans responsible for judgment.

Limitations (Worth Stating Plainly)

The paper is clear about its limits:

- Small sample size, novice participants

- Specific educational context

- Static analysis as an incomplete proxy

So don't oversell it as "AI makes developers better."

The more accurate claim is: AI improves measurable cleanliness; teams must evolve what they measure and reward.

Reference

Haindl, P. & Weinberger, G. (2024), "Does ChatGPT Help Novice Programmers Write Better Code? Results From Static Code Analysis," IEEE Access, Vol. 12, pp. 114146-114156.

Closing

The study supports something practitioners already suspect: AI coding assistants are good at making code look better. Fewer convention violations. Lower measured complexity. These are real improvements, and they compound over a codebase.

But "looks better" and "is better" aren't the same thing.

The gap between them is where bugs hide, where architectural debt accumulates, and where production incidents originate. Static analysis catches formatting. It doesn't catch "this retry logic will hammer the database during an outage" or "this state machine has an unreachable branch that matters."

For teams adopting AI tools, the lesson isn't "don't use them." It's: recalibrate what you trust and what you verify. Let AI handle the surface. Keep humans responsible for the structure.

Because the most dangerous code isn't the code that looks bad. It's the code that looks clean and is quietly wrong.